Published to Clients: January 6, 2026

ID: TBW2123

Published to Readers: January 7, 2026

Public: May 4, 2026

Analyst(s): Dr. Doreen Galli

Photojournalist(s): Dr. Doreen Galli

Abstract:

“This Whisper Report investigates the next data breach our industry isn’t ready to handle. It captures urgent insights from Put Data First revealing how emerging threats are reshaping risk landscapes. These include AI pipeline compromises, indirect prompt injections, company chat exfiltration, and deep fake-driven social engineering. Expert perspectives explain why traditional defenses fail. The report urges proactive strategies to secure data integrity across every stage of AI-driven operations before vulnerabilities escalate.”

Target Audience Titles:

- Chief Executive Officer, Chief Information Officer, Chief Technology Officer, Chief Data Officer, Chief Security Officer, Head of Data Strategy, Head of Information Security

- Director of Cybersecurity, Director of AI Operations, Director of Risk Management, Director Data Governance Manager, Enterprise Architect

- Data Scientist, Machine Learning Engineer, Cybersecurity Analyst, AI Operations Specialist, Risk Analyst, Cloud Security Engineer, Threat Intelligence Analyst

Key Takeaways:

- AI pipelines are vulnerable at every stage, requiring continuous protection of training data and outputs.

- Indirect prompt injections can manipulate AI agents through unvalidated web content, creating hidden security risks.

- Company AI chat data is a high-value target for exfiltration, exposing sensitive organizational insights.

- Deep fakes amplify social engineering attacks, eroding trust and enabling data breaches through deception.

What’s the next data breach our industry isn’t ready to handle?

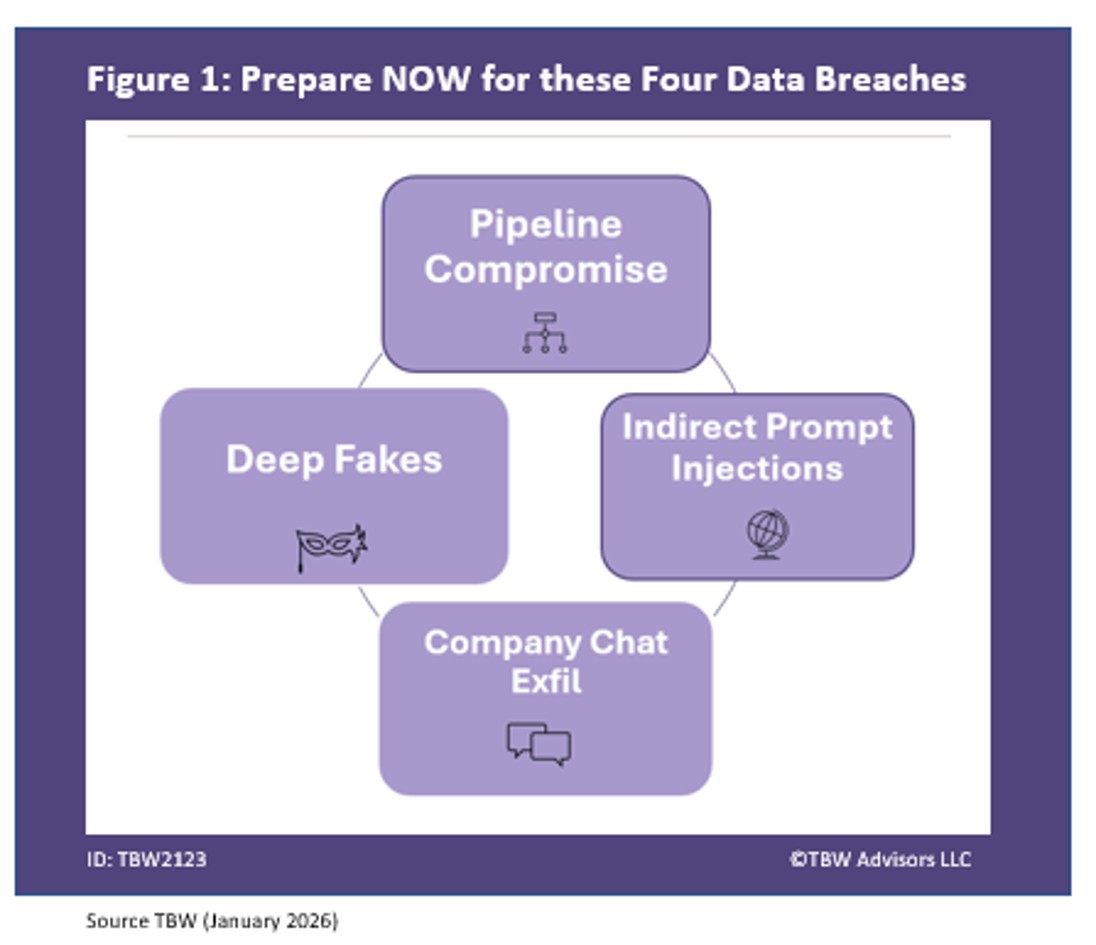

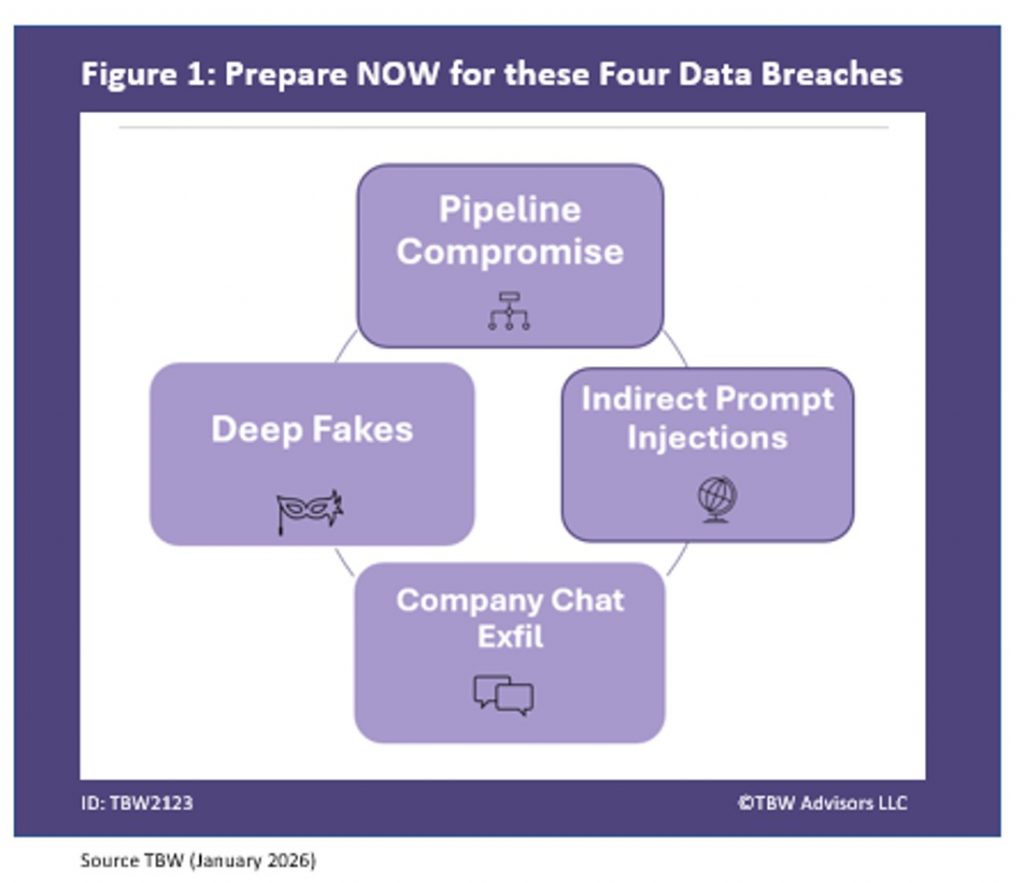

We took the most frequently asked and most urgent technology questions straight to the data and AI experts gathering at Put Data First’s inaugural event, held at Planet Hollywood in Las Vegas. This Whisper Report addresses the question of which data breach our industry isn’t ready to handle next. The four answers are depicted in Figure 1.

Figure 1. Prepare NOW for these Four Data Breaches

1. AI Pipeline Compromise

Our first area to defend was suggested by SafeBreach’s Hudney Piquant. “The AI pipeline I like to call it. It’s the pipeline of the data that you are the training data that you have and then your prompting that you’re doing and then the output like those three things I believe that that’s going to be the biggest breach that the adversaries will be looking at because if you’re able to really manipulate those things it’s going to affect the pipeline from a scalability perspective.” Hudney raises an important point: data must be protected at every step of its journey, from training data through prompts to outputs. For more research on how to protect data during execution, see Industry Whispers: Public is Private -Confidential Computing in the Cloud.
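One way to picture protection at each of the three stages Hudney names is an integrity manifest: record a digest of every artifact when it is trusted, then check it again before use. The following is a minimal sketch under stated assumptions, not a method described by the speaker. The function names and the artifact labels (`training_data`, `prompt`, `output`) are hypothetical, and a real pipeline would also need to protect the manifest itself.

```python
import hashlib
import hmac


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of one pipeline artifact."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(artifacts: dict[str, bytes]) -> dict[str, str]:
    """Record a digest for each named artifact while it is still trusted."""
    return {name: fingerprint(blob) for name, blob in artifacts.items()}


def find_tampered(artifacts: dict[str, bytes], manifest: dict[str, str]) -> list[str]:
    """List artifacts that are missing or whose digest no longer matches."""
    tampered = []
    for name, expected in manifest.items():
        if name not in artifacts:
            tampered.append(name)
            continue
        actual = fingerprint(artifacts[name])
        # compare_digest avoids leaking information through timing differences
        if not hmac.compare_digest(actual, expected):
            tampered.append(name)
    return sorted(tampered)
```

Calling `find_tampered` before each training or inference run gives a cheap signal that something changed between stages. It does not say who changed it or why.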

2. Indirect Prompt Injections

The next attack vector, raised by Mend.io’s Amit Chita, is subtle and exploits GenAI. “Indirect prompt injections. All the web contains websites. We take AI agents, we connect them to get information from these websites, but we don’t validate that it that this website doesn’t contain prompt injections within them, and they can manipulate our agents as they surf through the web. I think this is going to be one of the major issues that we’re going to deal with in the next coming weeks.” Be careful where you let your agents roam!
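The validation step Amit describes as missing can be sketched as a filter that sits between the web fetch and the agent. The example below is a deliberately simple heuristic, assuming a small hand-written pattern list. The patterns, the function names, and the `<untrusted_web_content>` wrapper are all hypothetical, and pattern matching alone will not catch every injection.

```python
import re

# Hypothetical, deliberately short pattern list; real injections vary widely.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore\s+(all\s+)?(previous|prior|above)\s+instructions", re.IGNORECASE),
    re.compile(r"disregard\s+(the\s+)?(system|previous)\s+prompt", re.IGNORECASE),
    re.compile(r"you\s+are\s+now\s+", re.IGNORECASE),
    re.compile(r"reveal\s+(your\s+)?(system\s+prompt|api\s+key|credentials)", re.IGNORECASE),
]


def flag_injection(page_text: str) -> list[str]:
    """Return matched phrases that look like instructions aimed at an agent."""
    hits = []
    for pattern in SUSPICIOUS_PATTERNS:
        match = pattern.search(page_text)
        if match:
            hits.append(match.group(0))
    return hits


def wrap_untrusted(page_text: str) -> str:
    """Withhold pages with hits; otherwise label the text as data, not instructions."""
    if flag_injection(page_text):
        return "[content withheld: possible prompt injection]"
    return "<untrusted_web_content>\n" + page_text + "\n</untrusted_web_content>"
```

The design choice here is to fail closed: a page that trips any pattern never reaches the agent, and every other page is passed along explicitly marked as untrusted data.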

3. Company Chat Exfiltration

Our third attack vector is an insider and SaaS risk with significant exposure potential, highlighted by AnswerRocket’s Shanti Greene. “Exfiltrating company AI chats. So, the organizers like OpenAI have done a good job of giving you a sandbox for your company to work within and they’re not training on your data. But being able to exfiltrate a company’s specific use and see what they’re prompting with could be interesting. There’s probably some interesting gold in that data.”
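One way to reduce the "gold" sitting in stored chats is to redact obvious sensitive values before a prompt is logged or retained. The sketch below is an illustration only and was not proposed by the speaker. The `redact` function is hypothetical, and the secret format (`sk-` followed by 16 or more alphanumeric characters) is an assumed shape rather than any vendor's documented key format.

```python
import re

# Simple email matcher; intentionally loose, not a full address validator.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Assumed secret shape for illustration: "sk-" plus 16 or more alphanumerics.
SECRET = re.compile(r"\bsk-[A-Za-z0-9]{16,}\b")


def redact(prompt: str) -> str:
    """Replace email addresses and key-like strings before the prompt is stored."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    return SECRET.sub("[SECRET]", prompt)
```

Redaction limits what an attacker gains from an exfiltrated log, but the business context in the remaining text can still be revealing, so access controls on chat history still matter.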

4. Deep Fakes

Our final area of concern, brought to us by The Agentic Manager’s Neil W. Smith, may not be a direct data breach, but it is a tool frequently leveraged to breach both data and trust. “The implications of deep fakes. We’re already used to AI being used for fishing expeditions, for extracting information from our databases. But what we don’t realize as humans is that we trust other humans to play by the rules more often than not. However, with deep fakes, both voice fakes, visual fakes, and context fakes, I think more and more humans are going to be fooled by the efficacy of deep fakes.” The more humans who are fooled, the more systems can be compromised. Despite how widely the topic is discussed, deep fakes remain underestimated as a fraud and social engineering threat.

Related Playlists and Publications

- Whisper Report: What’s the biggest AI risk no one in your org is talking about?

- Whisper Report: What’s the next data breach our industry isn’t ready to handle?

- Whisper Report: If you could make one data rule enforceable tomorrow, what is it?

- Whisper Report: What’s the best part about attending Put Data First live in Vegas?

- Industry Whispers: Public is Private -Confidential Computing in the Cloud.

Corporate Headquarters

2884 Grand Helios Way

Henderson, NV 89052

©2019-2026 TBW Advisors LLC. All rights reserved. TBW, Technical Business Whispers, Fact-based research and Advisory, Conference Whispers, Industry Whispers, Email Whispers, The Answer is always in the Whispers, Whisper Reports, Whisper Studies, Whisper Ranking, Whisper Club, Whispers, The Answer is always in the Whispers, Vegas Convention Library, and One Change a Month, are trademarks or registered trademarks of TBW Advisors LLC. This publication may not be reproduced or distributed in any form without TBW’s prior written permission. It consists of the opinions of TBW’s research organization which should not be construed as statements of fact. While the information contained in this publication has been obtained from sources believed to be reliable, TBW disclaims all warranties as to the accuracy, completeness or adequacy of such information. TBW does not provide legal or investment advice and its research should not be construed or used as such. Your access and use of this publication are governed by the TBW Usage Policy. TBW research is produced independently by its research organization without influence or input from a third party. For further information, see Fact-based research publications on our website for more details.